Ge Yang

I study robot learning and intelligence. I believe simulators powered by generative AI might offer a better route toward generalist robots than real-world data alone. I am also building a data foundry, vuer.ai, and developing closed-loop evaluation using high-fidelity digital twins via the Neverwhere project.

I am currently a postdoc with Phillip Isola at MIT CSAIL and work closely with Xiaolong Wang at UCSD. I am a recipient of the NSF IAIFI Postdoc Fellowship and the Best Paper Award at the Conference on Robot Learning (CoRL) in 2023. I received my Ph.D. in Physics from UChicago, advised by David I. Schuster (now at Stanford), and my B.S. in Mathematics and Physics from Yale University.

🔥 Joining My Lab 🔥 - I am looking for students and full-time staff with a strong work ethic and problem-solving ability to join my lab, in two tracks: GenAI + Robotics, and Research Engineering.

As a member of the lab, you will work toward publications at top-tier conferences and contribute code to large-scale production systems and open-source projects. To apply, please email me your transcripts, your CV, and the duration of your availability.

I am currently on the faculty job market (2024 - 2025 cycle). Here is my statement: A Scalable Recipe for Robot Learning from Synthetic Data

News

- Mar 2024 Invited talk at UPenn GRASP Lab

- Oct 2024 Invited talk at OpenAI

- Oct 2024 Invited talk at Stanford REAL Lab

- Jan 2024 Invited talk at the California Institute of Technology, Vision Seminar

- Dec 2023 Invited talk at the University of Pennsylvania, GRASP Lab Seminar

- Oct 2023 Invited talk at ROPEM Workshop, IROS 2023

Guest lectures:

- Apr 2024 Guest lecture at Boston University, Computer Vision Course

- Mar 2024 Guest lecture at MIT, 9.357 Current Topics in Perception

- Nov 2023 Guest lecture at the University of Pennsylvania, Computer Graphics

- Nov 2023 Guest lecture at MIT, 6.S980 ML for Inverse Graphics

- Apr 2022 Guest lecture at MIT, 9.357 Current Topics in Perception

Research

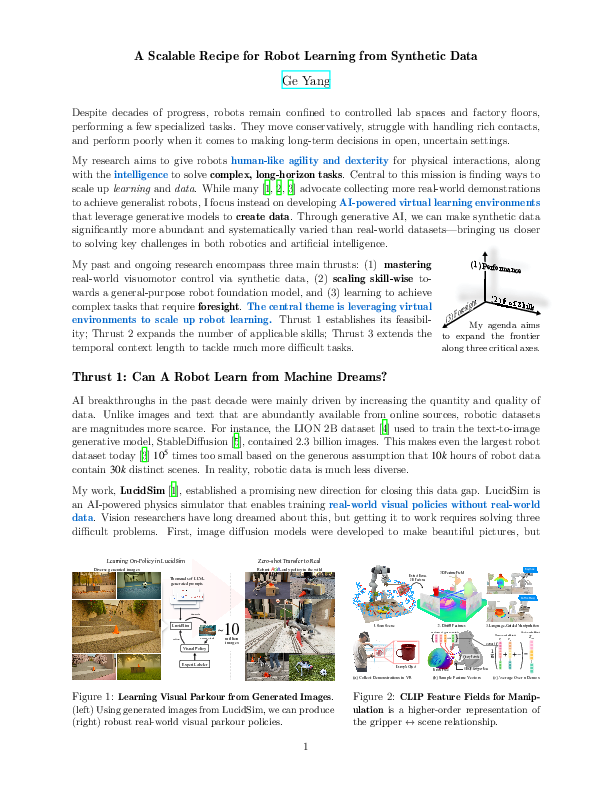

I want to give robots the visuomotor skills and decision-making capabilities typically associated with humans. Key to this vision is finding ways to scale up learning and data. My research clusters into three areas: (1) learning real-world visual policies from synthetic data, (2) scaling up human data to enable language-guided open-set skill learning, and (3) learning to make long-term decisions.

Highlights

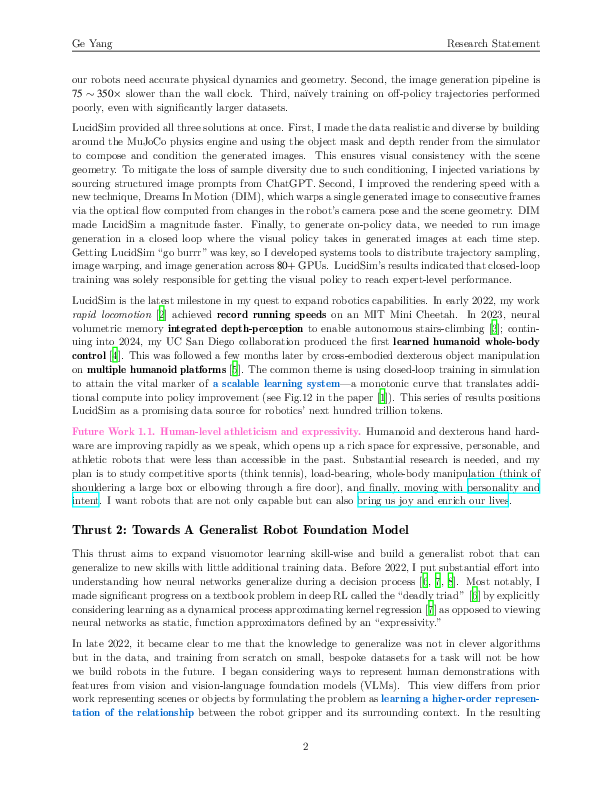

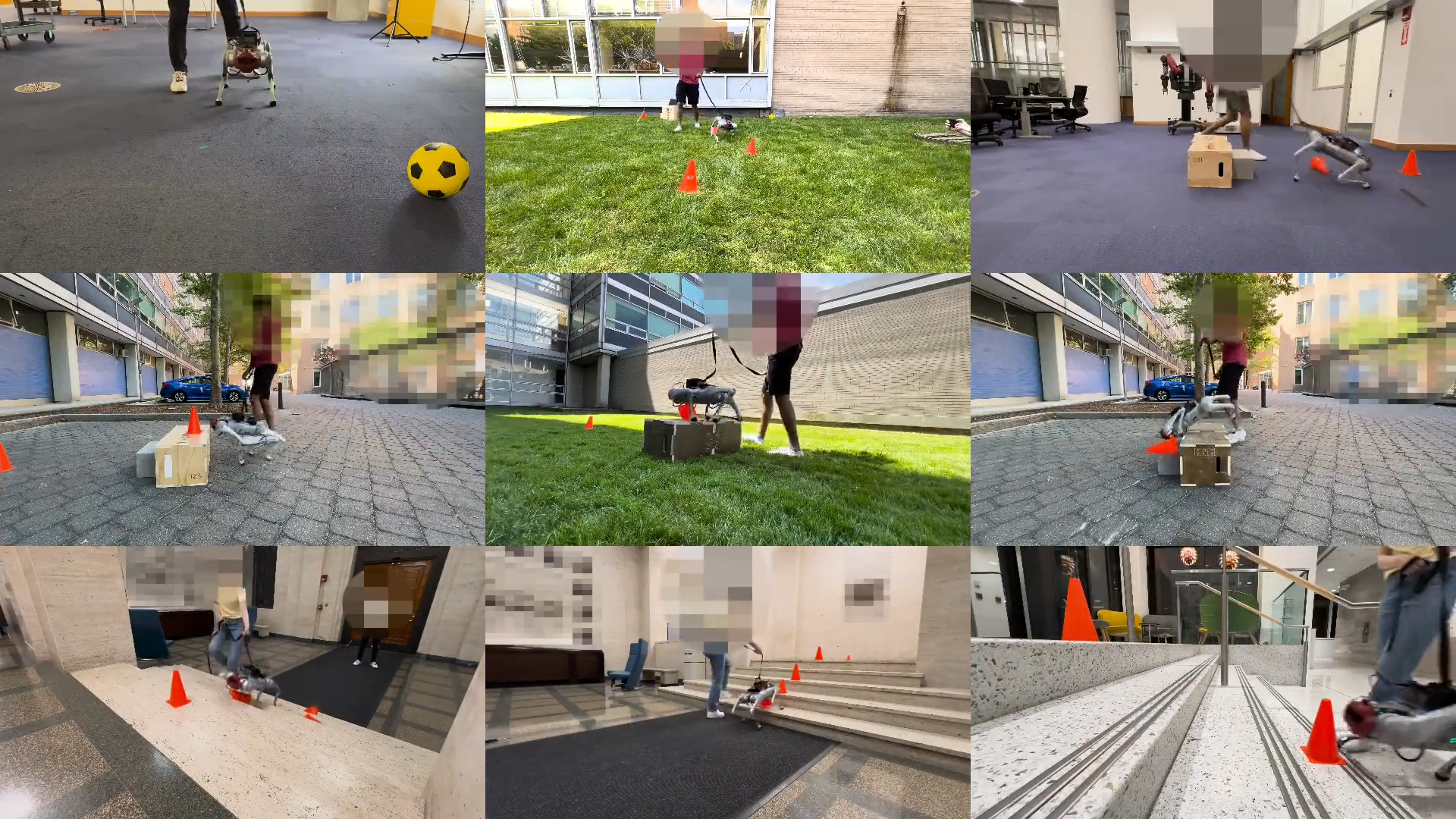

LucidSim: Learning Visual Parkour from Generated Images, CoRL 2024

Ge Yang*, Alan Yu*, Ran Choi, Yajvan Ravan, John Leonard, Phillip Isola

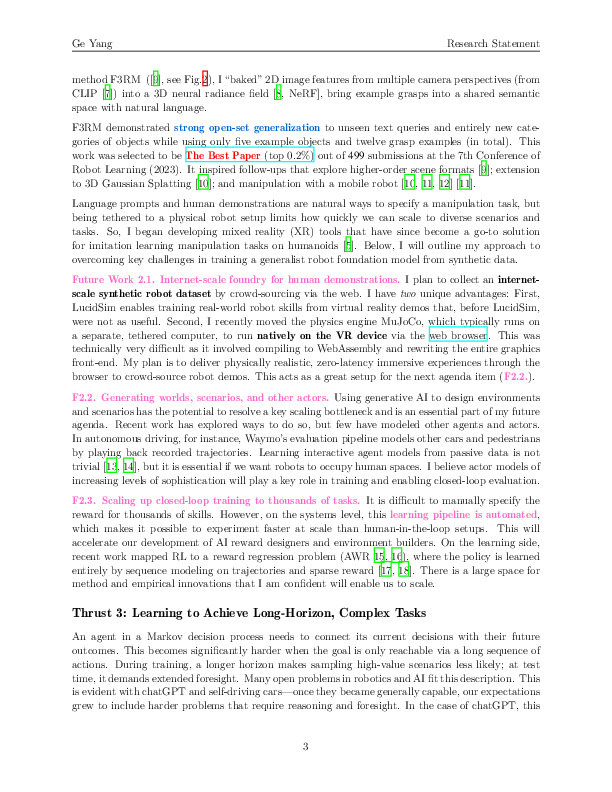

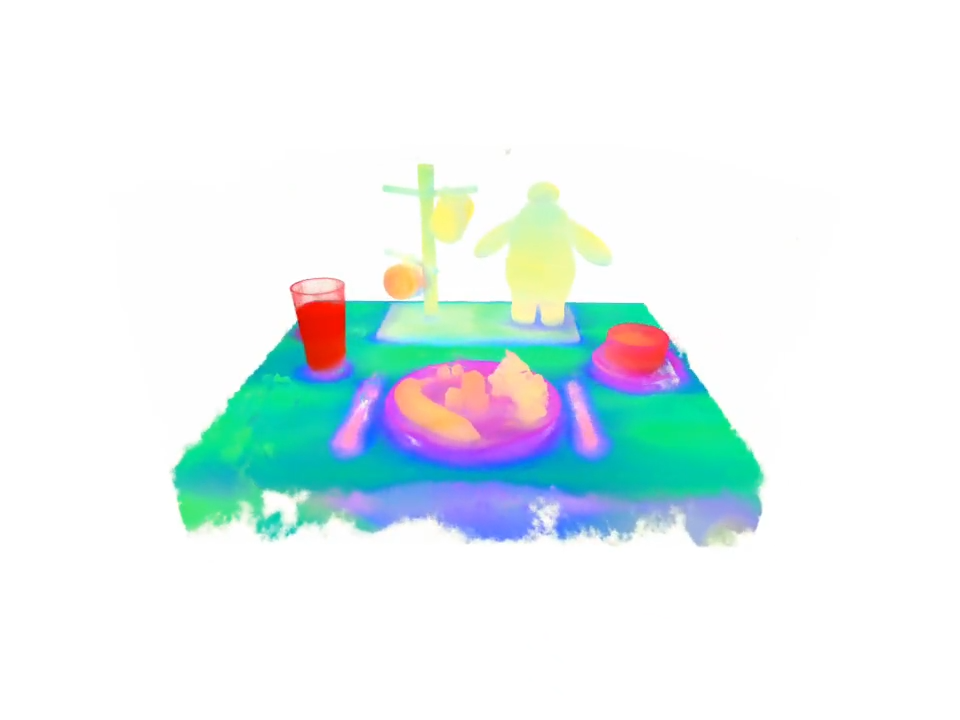

Distilled Feature Fields Enable Few-Shot Language-Guided Manipulation, CoRL 2023 Best Paper Award (top 0.2%, 1/499)

Ge Yang*, William Shen*, Alan Yu, Jansen Wong, Leslie Kaelbling, Phillip Isola

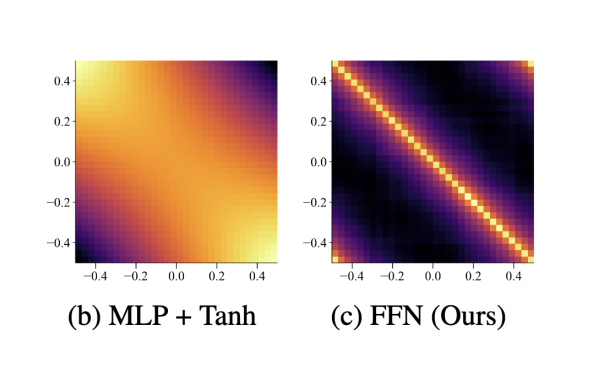

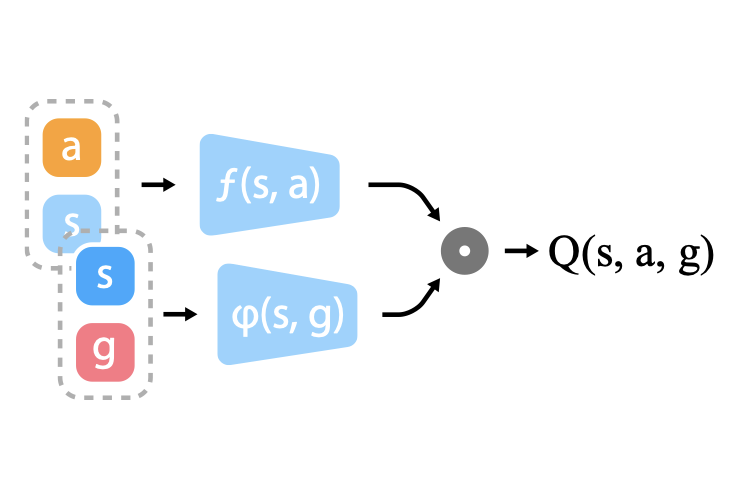

Overcoming The Spectral Bias of Neural Value Approximation, ICLR 2022

Ge Yang*, Anurag Ajay*, Pulkit Agrawal

Rapid Locomotion via Reinforcement Learning, RSS 2022

Ge Yang*, Gabriel Margolis*, Kartik Paigwar, Tao Chen, Pulkit Agrawal

Robotics

Open-TeleVision: Teleoperation with Immersive Active Visual Feedback, CoRL 2024

Xuxin Cheng, Jialong Li, Shiqi Yang, Ge Yang, Xiaolong Wang

Expressive Whole-Body Control for Humanoid Robots, RSS 2024

Xuxin Cheng, Yandong Ji, Junming Chen, Ruihan Yang, Ge Yang, Xiaolong Wang

Neural Volumetric Memory for Visual Locomotion Control, CVPR 2023 highlight

Ruihan Yang, Ge Yang, Xiaolong Wang

Machine Learning

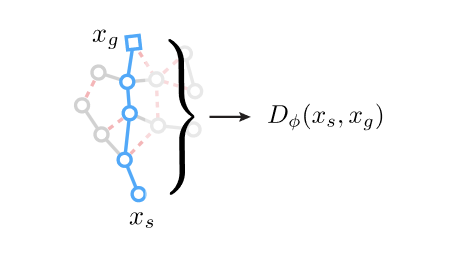

Plan2Vec: Unsupervised Representation Learning by Latent Plans, L4DC 2020

Ge Yang*, Amy Zhang*, Ari S. Morcos, Joelle Pineau, Pieter Abbeel, Roberto Calandra

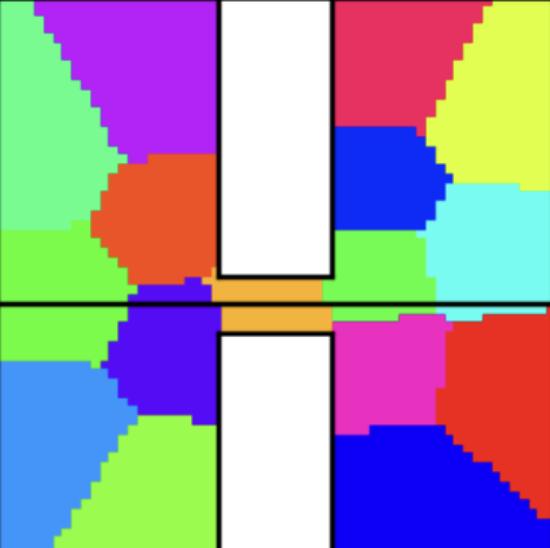

Learning Plannable Representations with Causal InfoGAN, ICML 2018

Thanard Kurutach, Aviv Tamar, Ge Yang, Stuart J. Russell, Pieter Abbeel

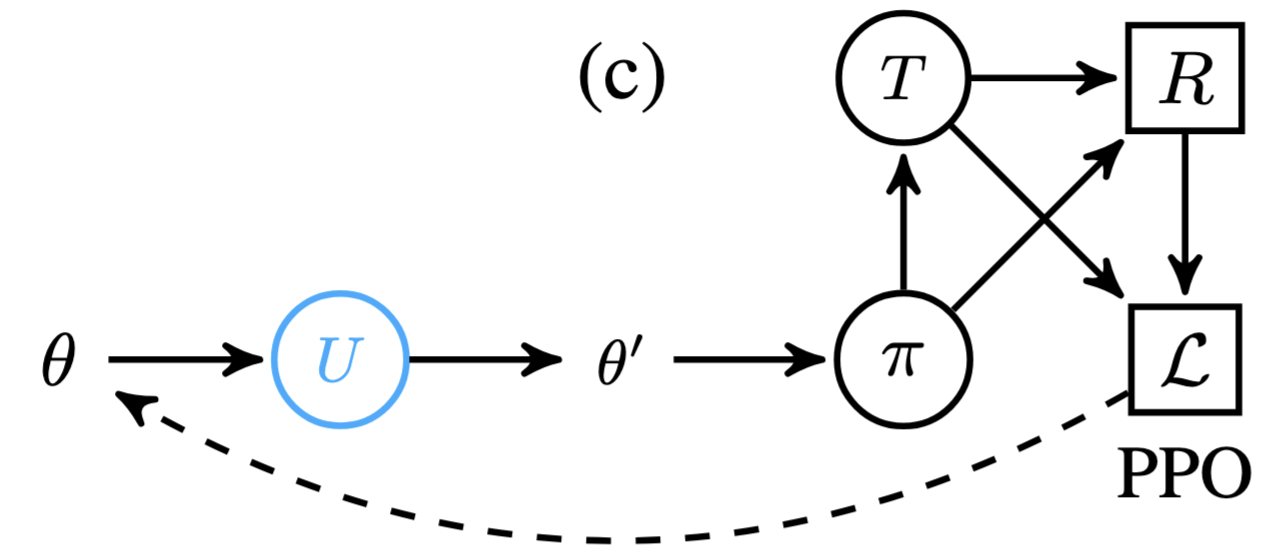

Some Considerations on Learning to Explore via Meta-Reinforcement Learning, ICLR 2018

Ge Yang*, Bradly C. Stadie*, Rein Houthooft, Xi Chen, Yan Duan, Yuhuai Wu, Pieter Abbeel, Ilya Sutskever

Others

Feature Splatting: Language-Driven Physics-Based Scene Synthesis and Editing, ECCV 2024

Ge Yang*, Ri-Zhao Qiu*, Weijia Zeng, Xiaolong Wang

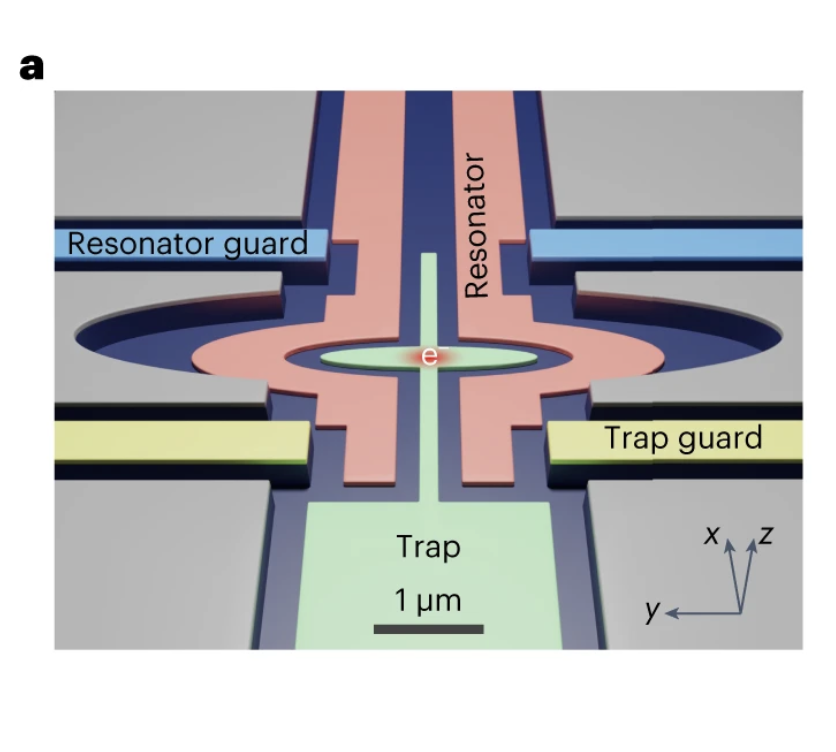

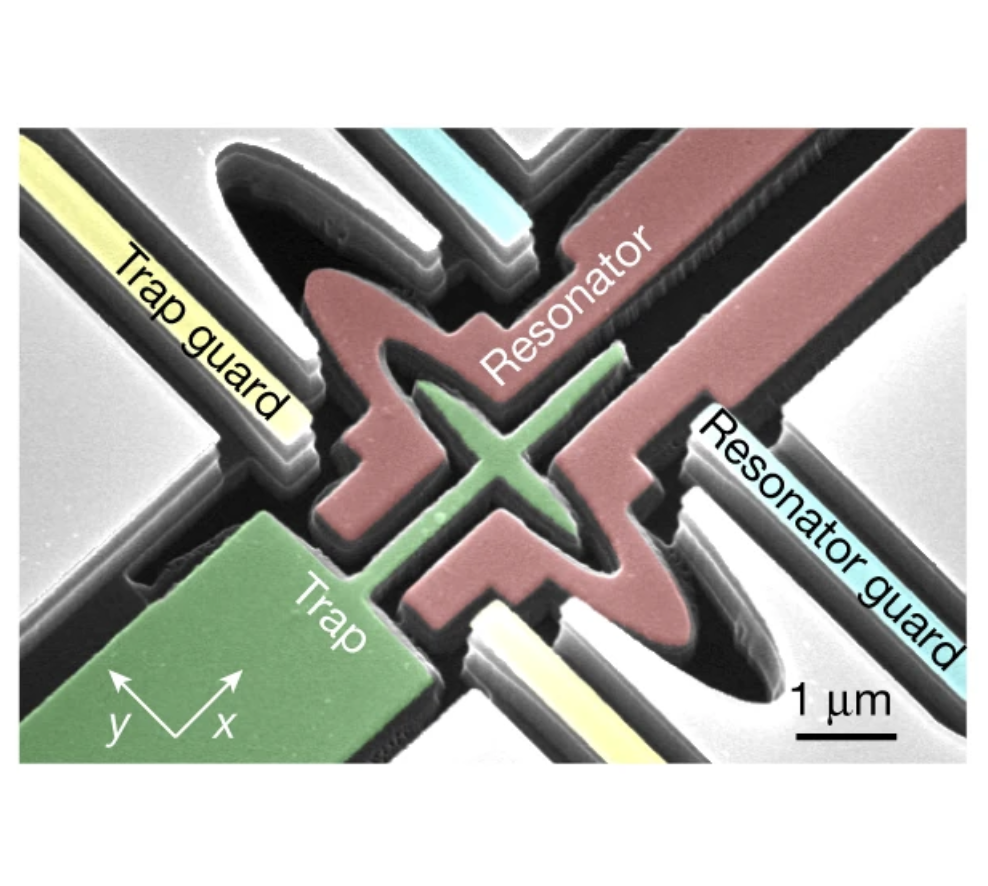

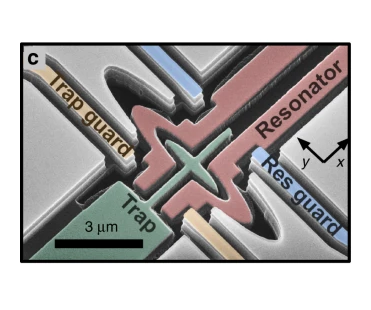

Electron charge qubit with 0.1 millisecond coherence time,

Xianjing Zhou, Xinhao Li, Qianfan Chen, Gerwin Koolstra, Ge Yang, Brennan Dizdar, Yizhong Huang, Christopher S. Wang, Xu Han, Xufeng Zhang, David I. Schuster, Dafei Jin

Single electrons on solid neon as a solid-state qubit platform,

Xianjing Zhou, Gerwin Koolstra, Xufeng Zhang, Ge Yang, Xu Han, Brennan Dizdar, Xinhao Li, Ralu Divan, Wei Guo, Kater W. Murch, David I. Schuster, Dafei Jin

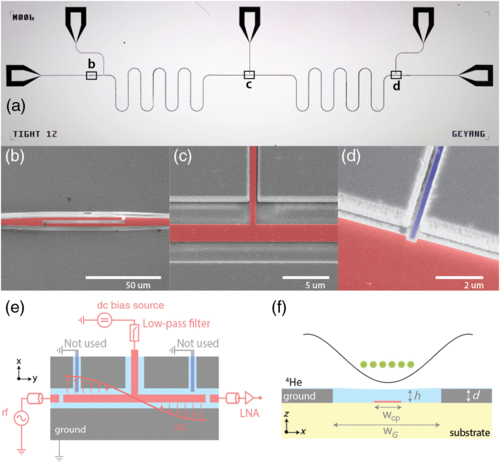

Coupling A Single Electron on Superfluid Helium to A Superconducting Resonator,

Gerwin Koolstra, Ge Yang, David I. Schuster

Coupling an ensemble of electrons on superfluid helium to a superconducting circuit,

Ge Yang, Andreas Fragner, Gerwin Koolstra, Leonardo Ocola, David A. Czaplewski, Robert J. Schoelkopf, David I. Schuster

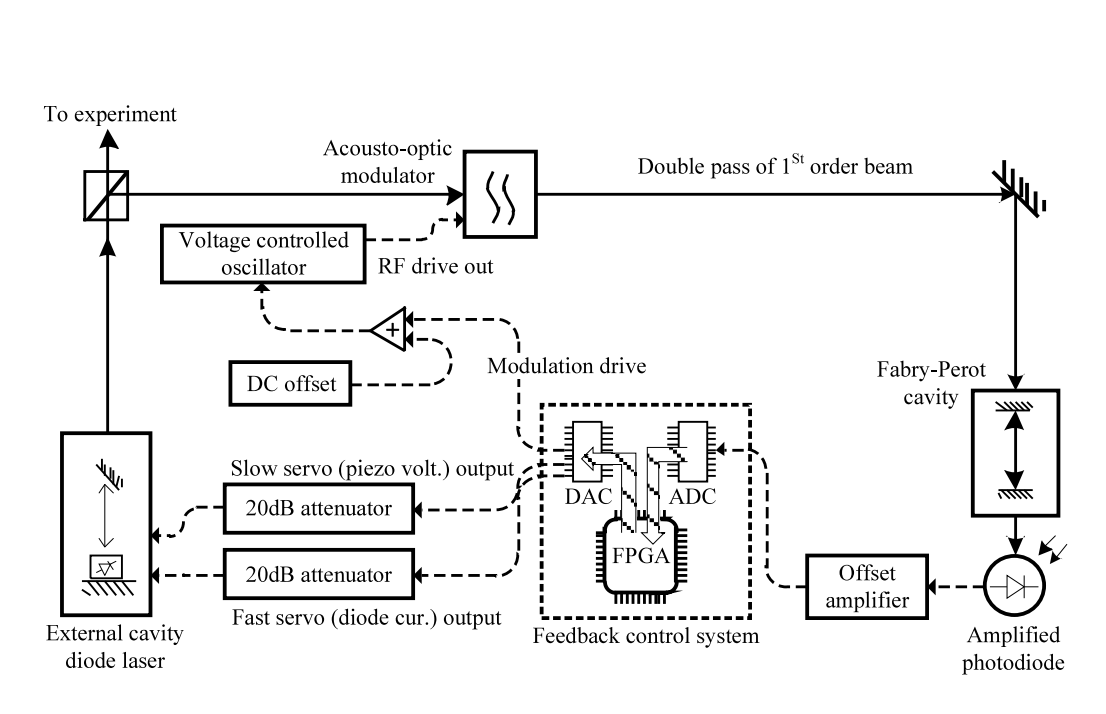

A low‑cost, FPGA‑based servo controller with lock‑in amplifier,

Ge Yang, John F Barry, Edward S Shuman, M H Steinecker, David DeMille

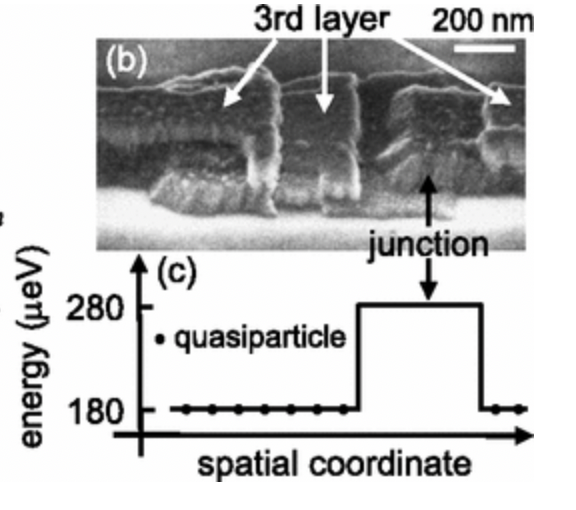

Measurements of Quasiparticle Tunneling Dynamics In A Band‑gap‑engineered Transmon Qubit,

Luyan Sun, Leo DiCarlo, MD Reed, G Catelani, Lev S Bishop, David I. Schuster, BR Johnson, Ge Yang, L Frunzio, L Glazman, MH Devoret, RJ Schoelkopf

Open-source Tools

Here are a few of the 40+ open-source packages I have published over the years in service of the community.

- jaynes - v0.5.25 - Enables large-scale ML training across compute providers [link]

- ml-logger - v0.4.46 - A distributed logger and visualizer for ML research [link]

- params-proto - v2.6.0 - A singleton design pattern for defining ML model parameters [link]

- CommonMark X (CMX) - v2.6.0 - A modern replacement for Jupyter notebooks that converts Python to Markdown [link]

- luna - v1.6.3 - An RxJS implementation of Redux, written in TypeScript [link]

- luna-saga - v6.2.1 - A coroutine runner for Luna that enables generator-based async flow [link]